Traditional Data Center vs AI Data Center: What’s the Real Difference?

If you’ve been following the tech world lately, you’ve probably noticed one phrase showing up everywhere: AI Data Center. It’s on the news, in infrastructure investment reports, and at the center of billion-dollar announcements from companies like Microsoft, Google, and Reliance Industries.

But here’s a question that most articles skip over: What actually makes an AI data center different from a traditional one? Are companies just rebranding old infrastructure with a fancy new name, or is there a genuinely meaningful difference in how these facilities work?

First, Let’s Understand What a Traditional Data Center Does

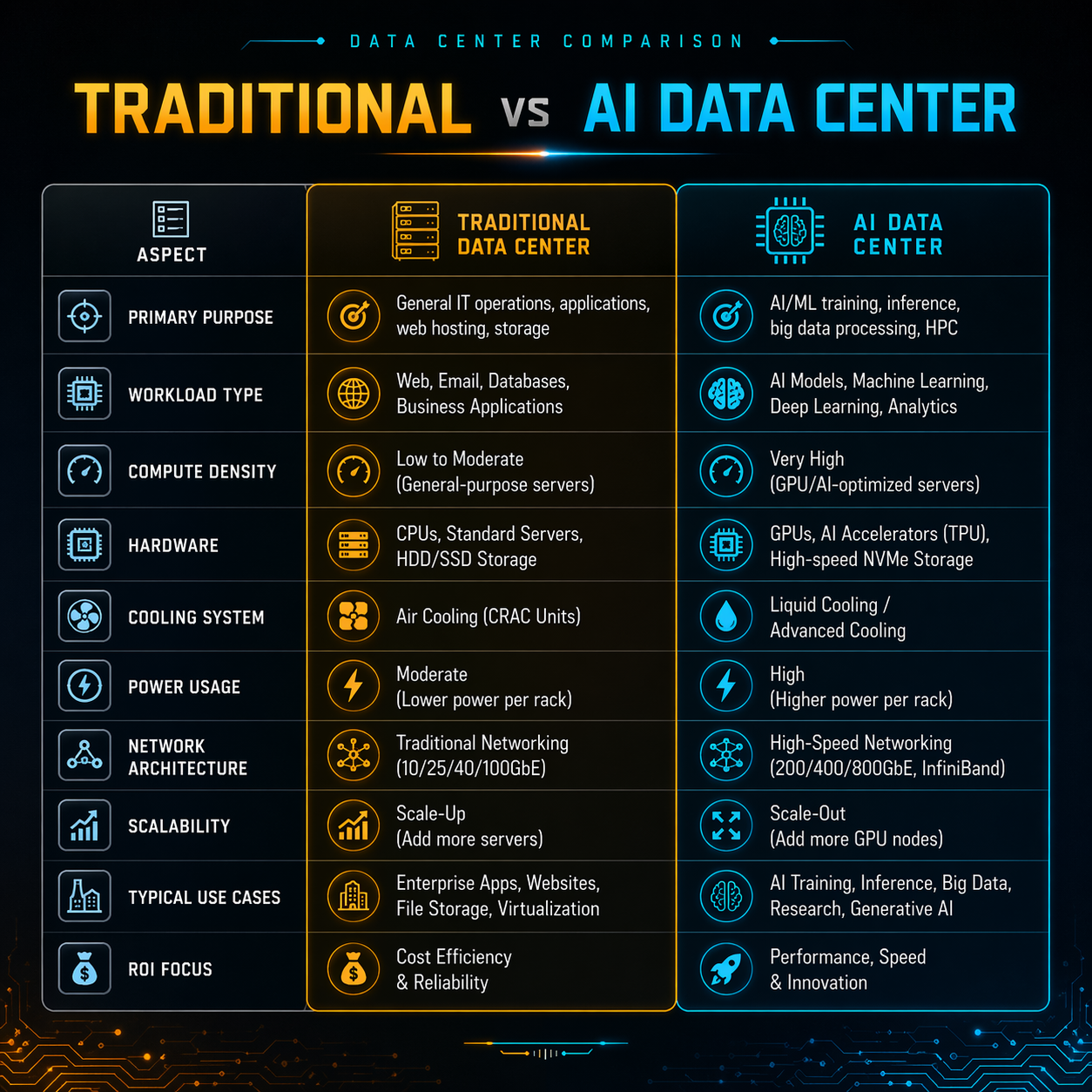

A traditional data center is essentially a large, climate-controlled building packed with servers, storage systems, and networking equipment. Its job is to store data, run applications, host websites, and process everyday business workloads.

Think of it as a very powerful, very organized filing cabinet. It is what sits behind your banking app, your company’s email server, or an e-commerce website’s product catalogue.

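These facilities rely on CPU-centric computing. CPUs are built for general-purpose work: each core steps through its instructions largely one after another, and a server has a relatively small number of cores. As a result, traditional data centers are typically characterized by: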

- General-purpose workloads like web hosting, databases, and ERP systems

- Moderate, predictable power consumption

- Standard air-based cooling systems

- High availability and uptime for business applications

So, What Is an AI Data Center Really?

Simply put, an AI data center is a facility purpose-built for artificial intelligence workloads: training large language models (LLMs), running real-time inference, processing computer vision tasks, and handling massive parallel computations.

The fundamental difference isn’t just about having “more servers.” Instead, it reflects a completely different philosophy of computing.

Traditional data centers rely on CPUs. In contrast, AI data centers are built around GPUs (Graphics Processing Units) such as NVIDIA’s H100 and custom AI accelerators such as Google’s TPUs or AWS Trainium chips. These processors execute thousands of operations simultaneously, which is exactly what the matrix-heavy math of training an AI model requires.

The 5 Real Differences You Should Know About

1. Computing Architecture

In a traditional data center, CPU racks dominate the floor space. In an AI data center, however, GPU clusters take center stage. A single AI training server commonly houses 8 high-performance GPUs, and a rack stacks several of these servers. The GPUs connect through ultra-fast interconnects, typically NVIDIA’s NVLink inside a server and InfiniBand between servers, so that the whole cluster functions as one massive computing unit.

2. Power Consumption — The Biggest Shock

This is where the real gap becomes visible.

A standard server rack in a traditional data center consumes roughly 5 to 15 kilowatts (kW) of power. An AI GPU rack, on the other hand, can draw 60 to 120 kW, and next-generation racks are pushing beyond 200 kW.
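To put those figures in perspective, here is a rough back-of-the-envelope sketch in Python. The 1 MW IT power budget is an assumed, illustrative number rather than a figure from any specific facility, and the per-rack values are simply representative picks from the ranges quoted above.

```python
def racks_supported(it_power_budget_kw: float, rack_power_kw: float) -> int:
    """Return how many whole racks a given IT power budget can feed."""
    if rack_power_kw <= 0:
        raise ValueError("rack_power_kw must be positive")
    return int(it_power_budget_kw // rack_power_kw)


# Assumed, illustrative budget: 1 MW (1,000 kW) of IT power.
BUDGET_KW = 1000.0

# Representative values picked from the ranges quoted in the text.
traditional_racks = racks_supported(BUDGET_KW, 10.0)   # within 5 to 15 kW per rack
ai_racks = racks_supported(BUDGET_KW, 100.0)           # within 60 to 120 kW per rack

print(f"Traditional racks per MW: {traditional_racks}")
print(f"AI racks per MW: {ai_racks}")
```

Under these assumptions, the same megawatt that feeds a hundred traditional racks feeds only about ten AI racks, which is why power delivery shapes the whole facility design.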

3. Cooling: From Air to Liquid

Traditional data centers cool themselves with air. Big HVAC systems push chilled air through raised floors and hot/cold aisle setups. This works well when racks consume moderate power.

However, at 100 kW+ per rack, air simply can’t remove heat fast enough. Therefore, AI data centers increasingly turn to liquid cooling. For instance, direct liquid cooling (DLC) sends coolant directly to the chip via cold plates. Alternatively, immersion cooling submerges entire server boards in a non-conductive liquid bath.
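A quick way to see why air runs out of headroom is the steady-state heat balance: heat removed = density × volumetric flow × specific heat × temperature rise. The sketch below applies it to a 100 kW rack. The fluid properties are approximate room-temperature textbook values, and the 10 K temperature rise is an assumption chosen only to make the comparison like-for-like.

```python
def required_flow_m3_per_s(heat_w: float, density_kg_m3: float,
                           specific_heat_j_per_kg_k: float,
                           delta_t_k: float) -> float:
    """Volumetric coolant flow needed to carry away heat_w watts.

    Rearranges: heat = density * flow * specific_heat * delta_t.
    """
    return heat_w / (density_kg_m3 * specific_heat_j_per_kg_k * delta_t_k)


RACK_HEAT_W = 100_000.0  # a 100 kW rack, as in the text
DELTA_T_K = 10.0         # assumed coolant temperature rise

# Approximate properties near room temperature (assumed values).
air_flow = required_flow_m3_per_s(RACK_HEAT_W, 1.2, 1005.0, DELTA_T_K)
water_flow = required_flow_m3_per_s(RACK_HEAT_W, 1000.0, 4186.0, DELTA_T_K)

print(f"Air:   {air_flow:.2f} cubic meters per second")
print(f"Water: {water_flow * 1000:.2f} liters per second")
print(f"Air needs about {air_flow / water_flow:.0f}x the volume of water")
```

With these assumed values, air needs a volumetric flow thousands of times larger than water to carry away the same heat, which is the basic physical reason liquid wins at high rack densities.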

4. Network Infrastructure

AI workloads are notorious for their hunger for data movement. For example, when training a model across hundreds of GPUs, those processors must communicate constantly and at very high speed. Additionally, latency that is perfectly acceptable in a traditional business app can cripple an AI training job, because in synchronized training every GPU waits on the slowest exchange.

As a result, AI data centers use ultra-high-bandwidth, ultra-low-latency fabrics, typically 400G or 800G links arranged in spine-leaf architectures that optimize east-west traffic (GPU to GPU) rather than north-south traffic (client to server).
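To get a feel for what those link speeds mean, here is a minimal sketch that estimates the ideal time to move a payload between two machines. The 10 GB payload and the 10G comparison link are assumptions for illustration, and the calculation ignores protocol overhead and congestion, so real transfers would be slower.

```python
def transfer_time_s(payload_gigabytes: float, link_gbps: float) -> float:
    """Ideal time to push a payload over a link, ignoring all overhead."""
    if link_gbps <= 0:
        raise ValueError("link_gbps must be positive")
    payload_bits = payload_gigabytes * 8e9   # 1 GB = 8e9 bits (decimal units)
    return payload_bits / (link_gbps * 1e9)  # link speed in bits per second


PAYLOAD_GB = 10.0  # assumed size of one data exchange between GPUs

# 10G is an assumed stand-in for a conventional link; 400G and 800G come from the text.
for link_gbps in (10.0, 400.0, 800.0):
    seconds = transfer_time_s(PAYLOAD_GB, link_gbps)
    print(f"{link_gbps:>5.0f}G link: {seconds:.3f} s")
```

Because a training job repeats exchanges like this over and over, even a few seconds saved per exchange adds up to hours or days across a full run.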

5. Storage Requirements

Traditional data centers mostly handle structured data in well-organized databases. AI workloads, however, demand access to enormous unstructured datasets: terabytes to petabytes of text, images, video, and sensor data. To keep GPUs busy, the storage behind these datasets needs extremely high read and write throughput.
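A rough sketch of where that throughput requirement comes from: multiply the number of GPUs by how fast each one consumes training samples and by the size of each sample. Every number below (cluster size, samples per second, sample size) is a hypothetical value chosen for illustration, not a measurement.

```python
def required_read_throughput_gb_s(num_gpus: int,
                                  samples_per_s_per_gpu: float,
                                  bytes_per_sample: float) -> float:
    """Aggregate read throughput (GB/s) needed so no GPU waits for data."""
    return num_gpus * samples_per_s_per_gpu * bytes_per_sample / 1e9


# Hypothetical image-training cluster (all values are assumptions).
NUM_GPUS = 512
SAMPLES_PER_S_PER_GPU = 2000.0
BYTES_PER_SAMPLE = 150_000.0  # roughly a 150 KB image

throughput = required_read_throughput_gb_s(
    NUM_GPUS, SAMPLES_PER_S_PER_GPU, BYTES_PER_SAMPLE
)
print(f"Required aggregate read throughput: {throughput:.1f} GB/s")
```

Under these assumptions the storage layer has to sustain well over 100 GB/s of reads, far beyond what a typical database-oriented storage system is designed to deliver.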

Why This Matters for India Specifically

India is at a genuinely exciting inflection point. In fact, the country is one of the fastest-growing markets for data center investment globally. Moreover, the AI wave is accelerating that growth dramatically.

India’s data center sector has seen announcements worth tens of billions of dollars in the last 18 months alone. These include Adani’s Green Data Center initiative, Microsoft’s $3 billion AI infrastructure investment, and Google’s expanding footprint in Mumbai and Pune.

However, not all of this investment is equal. A significant portion specifically targets AI data center capabilities, not just traditional hosting and colocation.

For Indian enterprises, this means:

- Cloud providers will increasingly offer GPU-based compute from Indian soil, reducing latency

- Compliance-conscious industries like BFSI and healthcare can run sensitive AI models without data leaving the country

- Startups and researchers will have more accessible, locally available AI compute infrastructure

Should Your Business Care About the Distinction?

Honestly? Yes, and here’s why.

If you procure colocation space, cloud infrastructure, or managed services, understanding this difference helps you make smarter decisions. For instance, if your workloads are standard databases, web apps, ERP, and backup storage, a traditional data center serves you just fine. In that case, you’d be overpaying for AI-grade infrastructure.

Final Thoughts

The difference between a traditional data center and an AI data center isn’t cosmetic. In fact, it runs through every layer of the infrastructure stack, from chip architecture and power systems to cooling design, networking fabric, and storage strategy.

As AI becomes central to how businesses compete and grow, this distinction will only become more important. Indeed, India’s rapidly maturing data center ecosystem positions it well as a significant global player. Nevertheless, this will only happen if the right investments go into truly AI-native facilities rather than legacy infrastructure dressed up with a new label.